Kafka & AI-Driven Event Streaming

Kafka

Event streaming platform

Real-time data processing

Scalable message broker

Fault-tolerant storage

Pub-sub & message queuing

High throughput, low latency

Apache Kafka is a leading distributed event-streaming platform for building scalable, real-time data pipelines and event-driven architectures. As a high-throughput, fault-tolerant messaging system, Kafka lets enterprises process, analyze, and act on data streams quickly and reliably, and it can supply AI-driven analytics with the real-time data they need.

⬤ Why Kafka?

Kafka moves data between systems in real time and serves as a foundation for AI-powered analytics and decision-making. It ingests data continuously and stores events durably in distributed logs, which makes it well suited to applications that need instant insights and intelligent responses.
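To make the idea of a durable log concrete, here is a minimal in-memory sketch of an append-only log that several consumer groups read at their own pace. It is an illustration only and does not use a Kafka client; the `EventLog` class, its method names, and the group names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class EventLog:
    """Toy append-only log: records are only ever appended, never modified."""
    records: list = field(default_factory=list)
    committed: dict = field(default_factory=dict)  # consumer group -> next offset to read

    def append(self, value):
        """Append a record and return its offset (its position in the log)."""
        self.records.append(value)
        return len(self.records) - 1

    def poll(self, group, max_records=10):
        """Return the next records for a group and advance only that group's offset."""
        start = self.committed.get(group, 0)
        batch = self.records[start:start + max_records]
        self.committed[group] = start + len(batch)
        return batch


log = EventLog()
for event in ["order-created", "order-paid", "order-shipped"]:
    log.append(event)

# Each group keeps its own position, so reading does not remove records.
analytics_batch = log.poll("analytics")
billing_batch = log.poll("billing", max_records=2)
```

Because reading never deletes a record, a slow or newly added group can still see every event that remains in the log. A real Kafka deployment adds partitioning, replication across brokers, and retention limits on top of this basic model.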

⬤ Key Capabilities

High-Throughput Event Streaming

Efficiently handles millions of events per second, enabling real-time processing and AI-based stream analytics at scale.Scalable & Distributed Architecture

Built to grow with your business, with clusters that support AI-driven workload optimization and adaptive scaling.Reliable & Durable Messaging

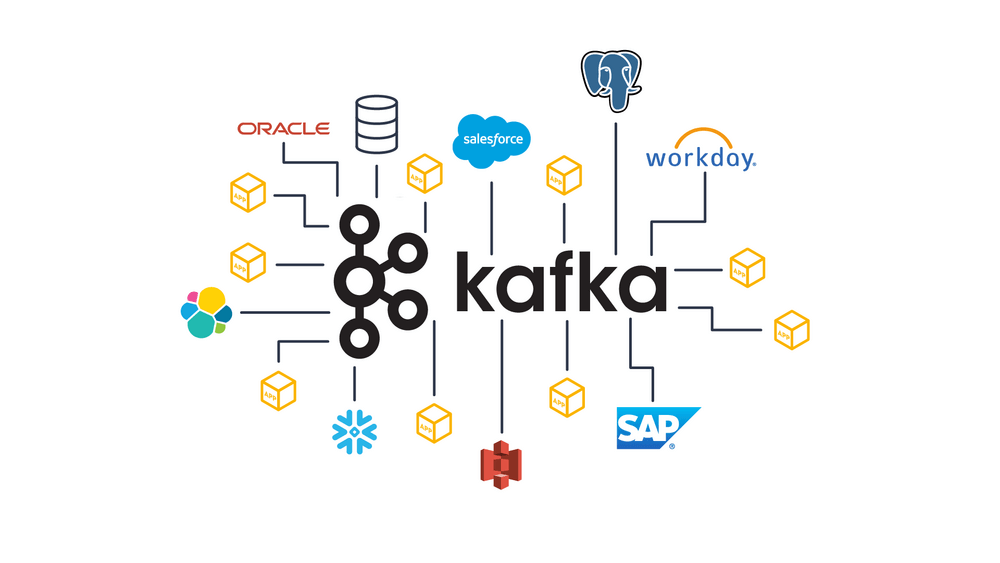

Ensures fault-tolerant storage for consistent and reliable data feeding into AI/ML systems.Flexible Integration

Integrates with Apache Spark, Apache Flink, Elasticsearch, and cloud services, including AI/ML pipelines and data platforms.Real-Time Analytics & Processing

Powers applications such as fraud detection, IoT pipelines, monitoring, and AI-driven behavior analysis and predictions.
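As a rough illustration of the kind of real-time check a fraud-detection consumer might run on a stream, the sketch below flags an account that produces too many events within a sliding time window. It is plain Python with no Kafka client; the `VelocityCheck` class, the thresholds, and the account IDs are hypothetical, and it assumes events for each account arrive in timestamp order.

```python
from collections import defaultdict, deque


class VelocityCheck:
    """Flags an account that exceeds max_events within window_seconds."""

    def __init__(self, max_events=3, window_seconds=60):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self.recent = defaultdict(deque)  # account id -> timestamps still inside the window

    def observe(self, account_id, timestamp):
        """Record one event; return True if the account is now over the limit."""
        window = self.recent[account_id]
        window.append(timestamp)
        # Drop timestamps that have aged out of the window.
        while window and timestamp - window[0] >= self.window_seconds:
            window.popleft()
        return len(window) > self.max_events


check = VelocityCheck(max_events=3, window_seconds=60)
events = [
    ("acct-1", 0), ("acct-1", 10), ("acct-2", 15),
    ("acct-1", 20), ("acct-1", 30), ("acct-1", 95),
]
flags = [check.observe(account, ts) for account, ts in events]
```

Here only the fourth `acct-1` event within the same minute is flagged. In practice this logic would typically live in a stream processor such as Apache Flink or Kafka Streams, which also handle state recovery and out-of-order events.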

⬤ Business Use Cases

Event-Driven Microservices

Enables loosely coupled services enhanced with AI-powered decision-making systems.

Data Pipeline Modernization

Acts as a hub for streaming ETL and AI-enabled data processing pipelines.

IoT Streaming & Telemetry

Supports large-scale device data with real-time AI-based anomaly detection.

Log Aggregation & Monitoring

Consolidates logs with AI-driven observability and predictive insights.

⬤ How We Help

As a technology consulting partner, we design and optimize Kafka solutions, including AI-driven streaming architectures and intelligent data pipelines.

Our services include:

- Architecture design & platform implementation

- Real-time data pipeline development

- Migration from legacy messaging systems

- Performance tuning & capacity planning

- Managed Kafka services and ongoing support

FAQs

- What is Apache Kafka used for?

Kafka is used for real-time data streaming, log processing, event-driven architectures, and messaging in large-scale applications.

- How does Kafka ensure fault tolerance?

Kafka replicates data across multiple brokers, ensuring fault tolerance and data durability, even if a node fails.

- What is the difference between Kafka and traditional message queues?

Unlike traditional message queues, Kafka persists data for a configurable time, allowing multiple consumers to read messages independently.

- Can Kafka handle large-scale data processing?

Yes, Kafka is designed for high-throughput and low-latency processing, making it suitable for big data and IoT applications.

- How does Kafka handle scalability?

Kafka scales horizontally by adding more brokers and partitions, allowing it to handle millions of messages per second efficiently.
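The sketch below illustrates why adding partitions lets work be spread out: each key maps to one partition, and the partitions are divided among the consumers in a group. It is a simplified model, not Kafka's implementation. The function names are hypothetical, CRC32 stands in for the murmur2 hash used by Kafka's default partitioner, and the round-robin assignment is a simplification of Kafka's configurable assignment strategies.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition so the same key always lands in the same place.

    CRC32 is an illustrative stand-in; Kafka's default partitioner uses murmur2.
    """
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign_partitions(num_partitions: int, consumers: list) -> dict:
    """Spread partitions round-robin across the consumers of one group."""
    assignment = {consumer: [] for consumer in consumers}
    for partition in range(num_partitions):
        owner = consumers[partition % len(consumers)]
        assignment[owner].append(partition)
    return assignment


# Six partitions shared by three consumers: each consumer owns two partitions.
assignment = assign_partitions(6, ["consumer-a", "consumer-b", "consumer-c"])

# Events for one device always go to the same partition, which preserves their order.
device_partition = partition_for("device-42", 6)
```

Because each partition is read by only one consumer in a group at a time, adding consumers beyond the partition count does not increase parallelism. That is why partition count is usually part of capacity planning.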